Design and Technology

The Design & Technology Team focuses on user-centric design to develop holistic strategies for the specific needs of your custom digital device application.

The Design and Technology (D+T) team creates digital health solutions to improve healthcare delivery. We design user-centric solutions in partnership with clinical and research experts, focusing on digital health technology, user experience, clinical practice, and scientific research. The team consists of software developers, game designers, multimedia specialists, and project scientists. Our services cover every stage of development, from idea creation to the deployment of new technologies that enhance patients’ everyday lives.

We value collaborative research, and our team supports grant applications and research publications. Our project scientists have served as co-investigators on a number of projects that received intramural or extramural funding. They have experience in grant writing and a track record of contributing to digital health research publications in impactful journals.

Our team offers custom web and mobile application development. Our expertise extends to user experience, design, and full stack engineering. We transform ambitious research proposals into functional applications using Unity, Node.js, React.js, SwiftUI, Amazon Web Services, and other emerging and popular technologies.

Examples include:

- Native Mobile Applications

  - Expertise in building applications for iOS and Android.

  - We leverage the power of the cloud, seamlessly integrating Amazon Web Services (AWS) in our mobile applications.

- Mobile/Desktop Web Applications

  - Full stack web application development includes database design and implementation, backend/business logic development, front end design and development, security, and analytics. The resulting applications are accessible anywhere you have a modern web browser installed.

- Responsive Web Applications

  - Responsive web design ensures that web pages display well on a variety of devices and window or screen sizes, because layouts adjust automatically. Responsive web applications do not replace mobile applications, but in some cases they are the ideal way to meet the needs of mobile and desktop users at the same time.

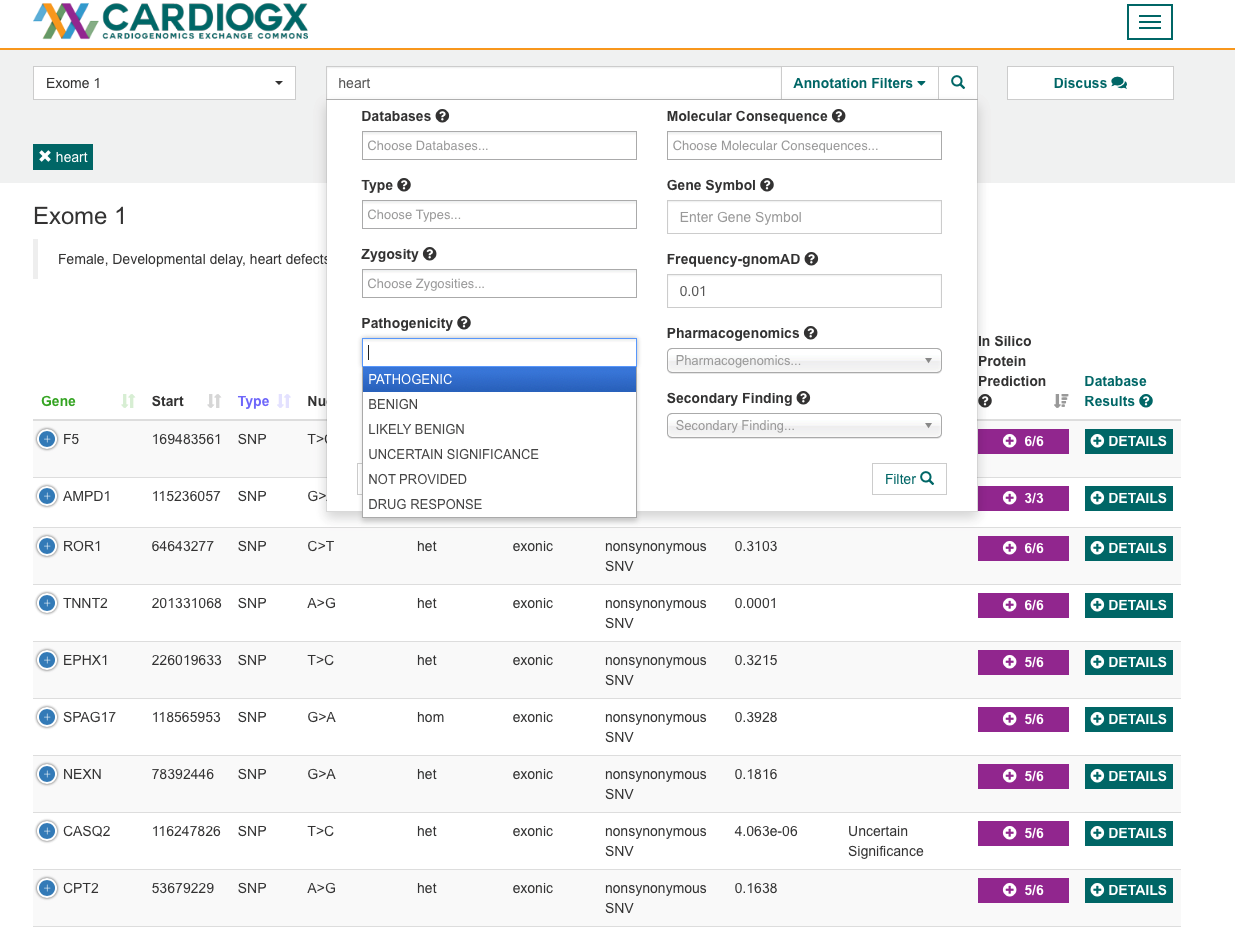

Case Study: CardioGX

CardioGenomics eXchange commons (CardioGX) is a cloud-based collaboration platform for the analysis and exchange of genome sequencing data. Our team developed the underlying data processing pipeline as well as an intuitive user interface that allows clinicians to perform searches in real time.

Game design provides a variety of ways to increase user engagement through visuals, stories, achievements, and more. Each engagement tool creates a different effect, depending on the user. We use a variety of approaches, tailoring our work to address the unique requirements of healthcare applications. We use current technology, such as motion tracking and head-mounted displays, to provide one-of-a-kind immersive experiences.

Through each step of the development process, we work closely with our partners to identify and deliver the best solutions for each project's individual goals. We test each application we create to ensure it meets the project’s goals and is easy and fun for anyone to use.

- Immersion for exposure therapy, distraction interventions, education

- Exploring consumer VR platforms, 360 video and asymmetrical app design

- Consultations on VR hardware, design considerations, etc.

- Interventions

- Voxel Bay - virtual reality distraction to alleviate needle-phobia complications

- MRI Simulator - virtual reality experience to reduce anxiety in patients

- Education

- Brain Bump - mobile virtual reality experience to educate kids on concussion symptoms

- MPATHI (Making Professionals Able THrough Immersion) - 360 video and gaze-based input on Oculus Go and mobile phones to educate healthcare providers on the challenges that immigrant families might face when seeking care for their children

- Tools

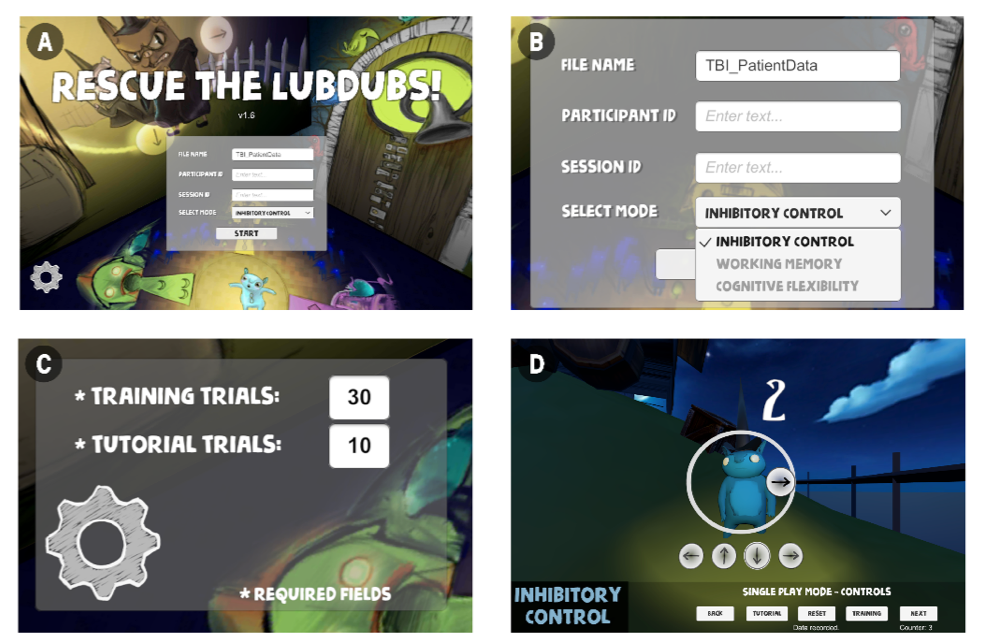

- VICT (Virtual Reality-based Interactive Cognitive Training) - virtual reality gamified TBI tests to measure recovery and assist in rehabilitation

- HearMeRead - tablet based app to assist speech therapists and families with teaching children who have hearing disabilities how to read

Case Study: A Virtual Reality-Based Executive Function Rehabilitation System for Children with Traumatic Brain Injury

We designed three VR games based on proven tasks for executive function assessment to facilitate rehabilitation of inhibitory control, working memory and cognitive flexibility.

The design allows the study team or therapist to record individual progress and set different goals for each patient.
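As a rough illustration of the per-patient goals and progress records described above, the sketch below shows one way such data could be organized. It is not the system's actual data model: the `PatientProgress` class, its field names, and the idea of scoring each session as a single number are all assumptions made for this example. The game names reuse the three executive functions named above.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class PatientProgress:
    """Hypothetical record of one patient's goals and session scores, keyed by game."""

    patient_id: str
    goals: Dict[str, float] = field(default_factory=dict)
    scores: Dict[str, List[float]] = field(default_factory=dict)

    def set_goal(self, game: str, target: float) -> None:
        # A therapist sets (or updates) the target score for one game.
        self.goals[game] = target

    def record(self, game: str, score: float) -> None:
        # Append the score from a completed session to the game's history.
        self.scores.setdefault(game, []).append(score)

    def goal_met(self, game: str) -> bool:
        """True if the most recent score reaches the goal set for this game."""
        history = self.scores.get(game)
        if not history or game not in self.goals:
            return False
        return history[-1] >= self.goals[game]


# Example: two patients could be given different targets for the same game.
patient = PatientProgress("p01")
patient.set_goal("working_memory", 0.8)
patient.record("working_memory", 0.6)
patient.record("working_memory", 0.85)
print(patient.goal_met("working_memory"))
```

Keeping goals separate from the score history means a target can be changed mid-study without rewriting past sessions.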

We value and support research and evidence dissemination, and are often active partners in writing research publications. The design paper for this project published in JMIR Serious Games can be accessed at https://games.jmir.org/2020/3/e16947/.

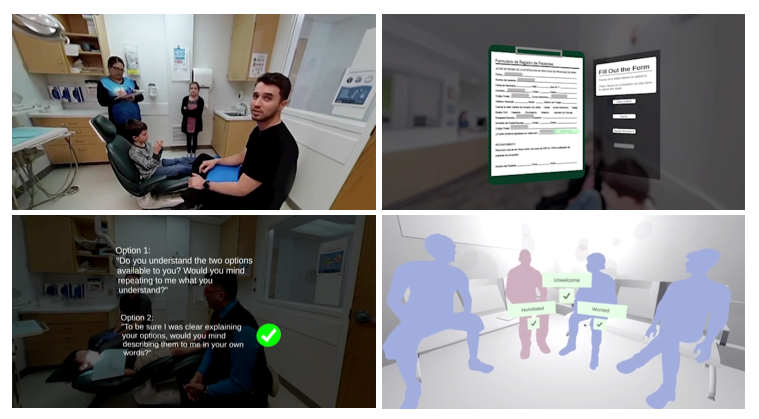

Case Study: MPATHI - An Immersive Virtual Reality-based Empathy Training for Dental Providers

This study, sponsored by the Ohio Department of Medicaid, aims to increase Medicaid providers’ cultural awareness and competency. In contrast to traditional training, where providers may watch someone else’s interaction through scripted scenarios, the VR experience provides a first-person patient perspective. In the VR experience, users are in a Spanish-speaking clinic as a parent seeking dental care for their child.

For more details of the simulation, please visit: https://mpathi.nchri.org

Figure. MPATHI consists of 360-degree video recordings and virtual scenes, with interactive components controlled by gaze.

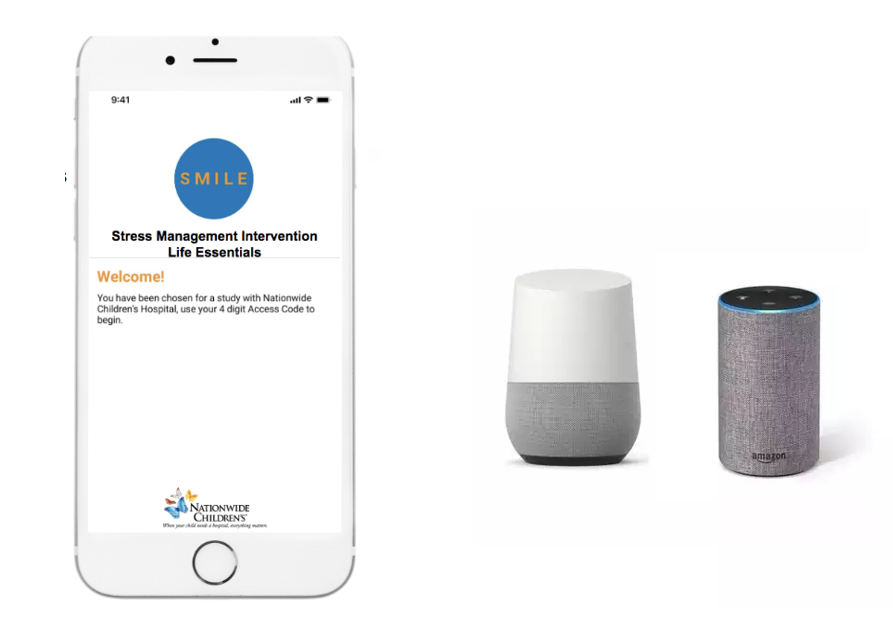

Voice assistants have advanced dramatically in the last decade. What began as simple dictation tools and smartphone command interfaces has developed into highly accurate speech-to-text and text-to-speech services and conversational personal assistants. We research ways to utilize voice technology in healthcare, with the goal of improving patient care. Our team actively explores ways that artificial intelligence can be used to generate personalized content, identify patterns, and use the human voice to detect health issues. The voice interactive apps and skills we develop and refine are compliant, scalable, and interoperable.

- Exploring consumer-facing tools (mobile apps, Google Assistant, Siri, Microsoft Cortana, Amazon Alexa Echo devices) and open source research tools (Mycroft, Google Voice kit, Raspberry Pi) in research and development of voice interaction in healthcare communications

- Creating digital health interventions for maternal and infant health

- SMILE project (link to HIMSS blog post). Details are available at https://www.iproc.org/2019/1/e15231/

- Open source intervention tool for patients (e.g., PHQ-9 screening, daily exercise for mindfulness, audio diary, symptom detection through continuous listening, push notifications, and podcasts)

- Video demo (Mycroft SMILE skill screening)

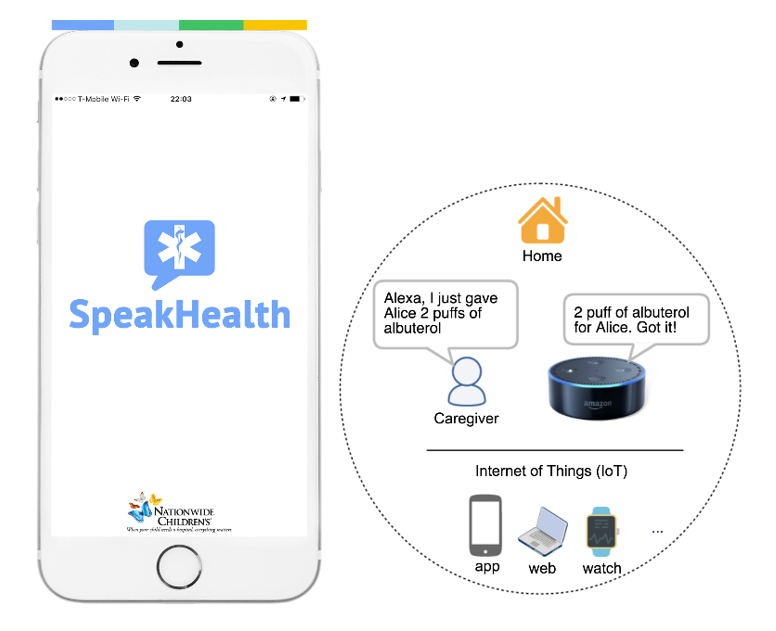

- Enabling voice interactive medical diary and symptom tracking for complex care

- SpeakHealth project (link to HRSA). Details are available at our multi-stakeholder perspective paper https://www.jmir.org/2020/2/e14202/

- Use of voice-activated devices in clinical care, charting and navigating EHR
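The PHQ-9 screening mentioned in the list above has a simple, published scoring rule: nine answers, each scored 0 to 3, are summed and the total is mapped to a severity band. The sketch below shows that arithmetic only. It is not the code of the SMILE skill or any other tool named here; the function name `score_phq9` and the `SEVERITY_BANDS` constant are made up for this example, and a voice application would still need to collect and validate the spoken answers before scoring them.

```python
from typing import List, Sequence, Tuple

# Standard PHQ-9 severity bands as (highest total in band, label).
SEVERITY_BANDS: List[Tuple[int, str]] = [
    (4, "minimal"),
    (9, "mild"),
    (14, "moderate"),
    (19, "moderately severe"),
    (27, "severe"),
]


def score_phq9(answers: Sequence[int]) -> Tuple[int, str]:
    """Return (total score, severity label) for nine answers scored 0-3."""
    if len(answers) != 9:
        raise ValueError("PHQ-9 needs exactly nine answers")
    if any(answer not in (0, 1, 2, 3) for answer in answers):
        raise ValueError("each answer must be 0, 1, 2, or 3")
    total = sum(answers)
    for upper_bound, label in SEVERITY_BANDS:
        if total <= upper_bound:
            return total, label
    # Not reachable: nine answers of at most 3 sum to at most 27.
    raise AssertionError("total out of range")


print(score_phq9([1, 1, 1, 1, 1, 1, 1, 1, 1]))
```

Rejecting malformed input up front matters more in a voice interface than on a form, since a misheard answer should trigger a re-prompt rather than a silent miscount.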

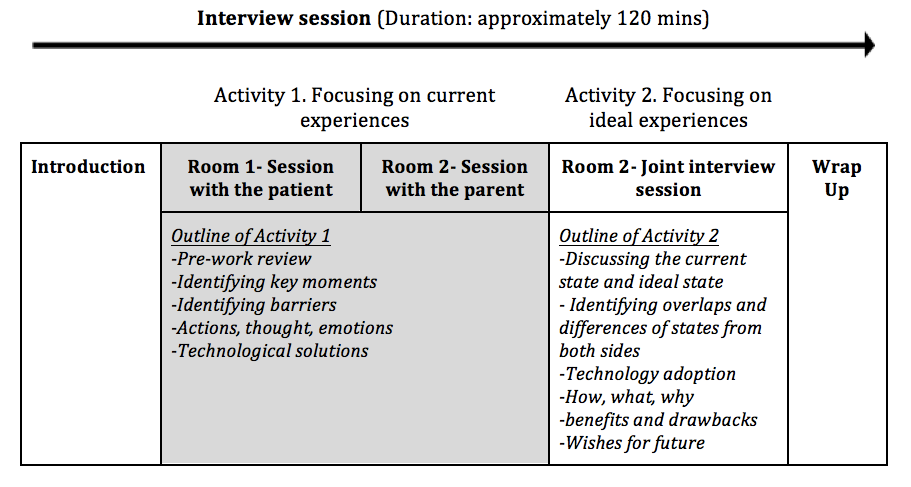

Good User Experience (UX) design requires a deep understanding of users: what they need, their values, abilities, and limitations. This is paired with a deep understanding of current technologies, so that we can choose those that meet the needs of each user type. Good UX practices improve the quality of the user’s interaction with our research initiatives. They create positive perceptions of each product and any related services. Our design process includes user research, co-design, and feasibility and usability testing.

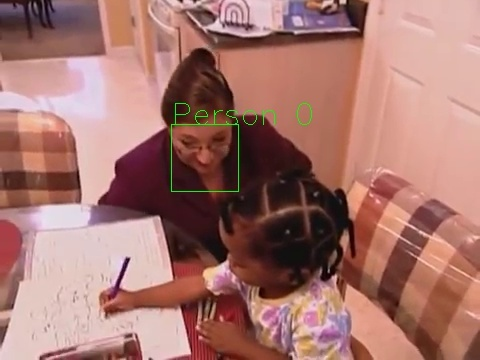

Figure. A co-design session example for understanding technology use by parent and child with chronic conditions (Full study is available at https://www.jmir.org/2018/9/e10285/)

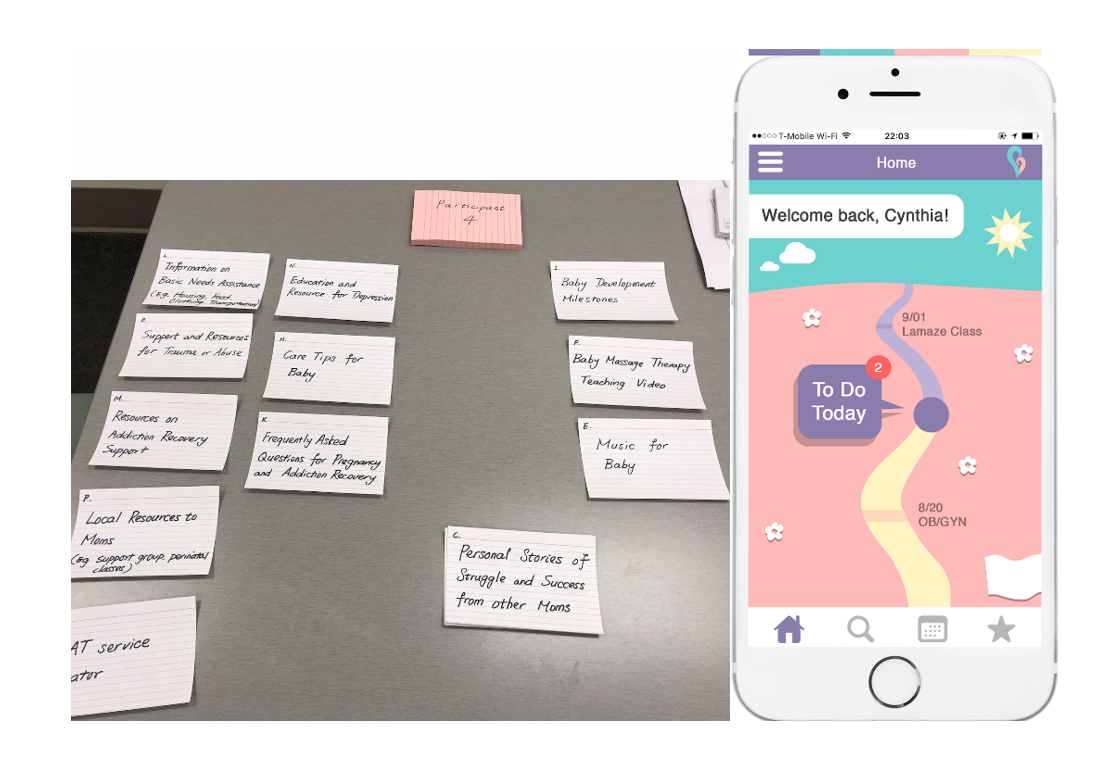

Figure. An example of card sorting with potential users for app functionality design and the resulting Empower Care app. This exercise can identify key features that would be most desired by the users and inform a more effective development process.

Case Study: Symptom Tracker

Symptom Tracker is an application for children and youth with chronic diseases that can be used on Apple products. Symptom Tracker is a user-friendly system for children and youth to monitor their symptoms, interruptions to quality of life, and activities of daily living. It has the ability to communicate these experiences in real time to parents and providers to start a conversation about their care. Using Apple’s face tracking technology, children can share symptoms simply by looking into the camera. This is useful for children with physical limitations.

Increasingly, our electronic devices are capable of continuously collecting measurements of our physiological state, such as heart rate, movement, and respiration. Furthermore, devices in our environment can collect voice, video, and motion data, which may be able to assist healthcare providers in monitoring mood, activity, and behavior.

When this data is used to help us understand or predict health outcomes, we refer to the measurements as digital biomarkers.

We provide end-to-end assistance to research studies and clinical interventions beginning with the setting up of wearables and environmental sensors and extending through the recording and analysis of digital biomarkers.
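To make the recording-to-analysis step concrete, the sketch below turns raw wearable heart rate samples into per-day summary features. It is an illustration under stated assumptions, not our analysis pipeline: the function name `daily_heart_rate_summary`, the `(timestamp, beats per minute)` input format, and the choice of mean, minimum, and maximum as features are all hypothetical. A summary like this only becomes a digital biomarker once it has been shown to help understand or predict a health outcome.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean
from typing import Dict, Iterable, List, Tuple


def daily_heart_rate_summary(
    samples: Iterable[Tuple[datetime, float]],
) -> Dict[str, Dict[str, float]]:
    """Group (timestamp, bpm) samples by calendar day and summarize each day."""
    by_day: Dict[str, List[float]] = defaultdict(list)
    for timestamp, bpm in samples:
        # Use the ISO date (YYYY-MM-DD) as the grouping key.
        by_day[timestamp.date().isoformat()].append(bpm)
    return {
        day: {
            "mean_bpm": mean(values),
            "min_bpm": min(values),
            "max_bpm": max(values),
        }
        for day, values in sorted(by_day.items())
    }


# Made-up sample data for illustration only.
example = [
    (datetime(2020, 1, 1, 8, 0), 60.0),
    (datetime(2020, 1, 1, 20, 0), 80.0),
    (datetime(2020, 1, 2, 9, 30), 72.0),
]
print(daily_heart_rate_summary(example))
```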

Current Projects:

- Exploring consumer-facing wearables (e.g., Fitbit, Apple Watch) to detect health events, such as seizures (Please see the link for published results of one of our projects: https://journals.sagepub.com/doi/abs/10.1177/0883073820937515)

- Exploring remote sensors for understanding sleep quality (Please see the link for published results of one of our projects: https://dl.acm.org/doi/10.1145/3360773.3360883)

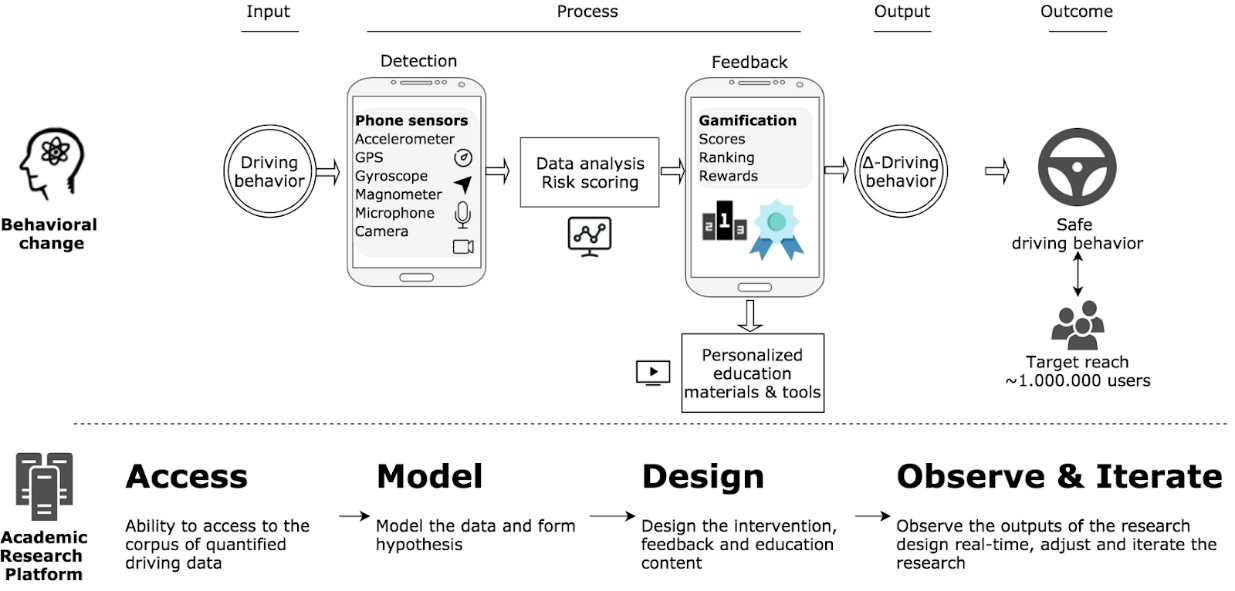

- Leveraging mobile phone sensors to understand driving behavior among teens (Please see the link for published results of one of our projects: https://www.sciencedirect.com/science/article/pii/S2590198220300014)

- Creating voice biomarkers to detect behavioral changes in family interactions (Please see the link for published results of one of our projects: https://formative.jmir.org/2020/6/e18279/)

Today’s AI capabilities let us analyze video frame by frame, in more detail than was previously practical. Using the AWS and Microsoft Azure platforms and open-source video stream analytics tools (e.g., OpenCV, ImageAI, TensorFlow), we can recognize and identify significant events in order to improve physical and behavioral health.
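As a much simpler stand-in for the AI models mentioned above, the sketch below shows the basic idea of per-frame analysis using plain frame differencing: each frame is compared with the one before it, and frames that change by more than a threshold are flagged as candidate events. This is not the method used in our projects. The function names, the threshold value, and the representation of a frame as rows of grayscale pixel values are assumptions for this example; in practice, frames would be decoded by a video library and passed to a trained model.

```python
from typing import List, Sequence

# A frame is modeled as rows of grayscale pixel values in the range 0-255.
Frame = Sequence[Sequence[int]]


def mean_abs_diff(a: Frame, b: Frame) -> float:
    """Average absolute per-pixel difference between two same-sized frames."""
    total = 0
    count = 0
    for row_a, row_b in zip(a, b):
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += abs(pixel_a - pixel_b)
            count += 1
    return total / count if count else 0.0


def flag_changed_frames(frames: Sequence[Frame], threshold: float = 10.0) -> List[int]:
    """Return indices of frames that differ from the previous frame by more than threshold."""
    return [
        index
        for index in range(1, len(frames))
        if mean_abs_diff(frames[index - 1], frames[index]) > threshold
    ]


# Tiny 2x2 example: one pixel lights up at frame index 2.
still = [[0, 0], [0, 0]]
moved = [[0, 255], [0, 0]]
print(flag_changed_frames([still, still, moved, moved]))
```

Frame differencing only detects that something changed; recognizing what changed (a person, a gesture, an emotion) is the part that requires the trained models and platforms described above.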

Image: Video and motion capture technology examples (from top left to bottom right: AWS DeepLens, Oura ring, Microsoft Azure Kinect, attachable glass camera, smartphone, Raspberry Pi computer)

- Identification and detection of individuals and objects, differentiation and labeling utilizing open source AI tools and Microsoft Azure platforms (Azure Kinect)

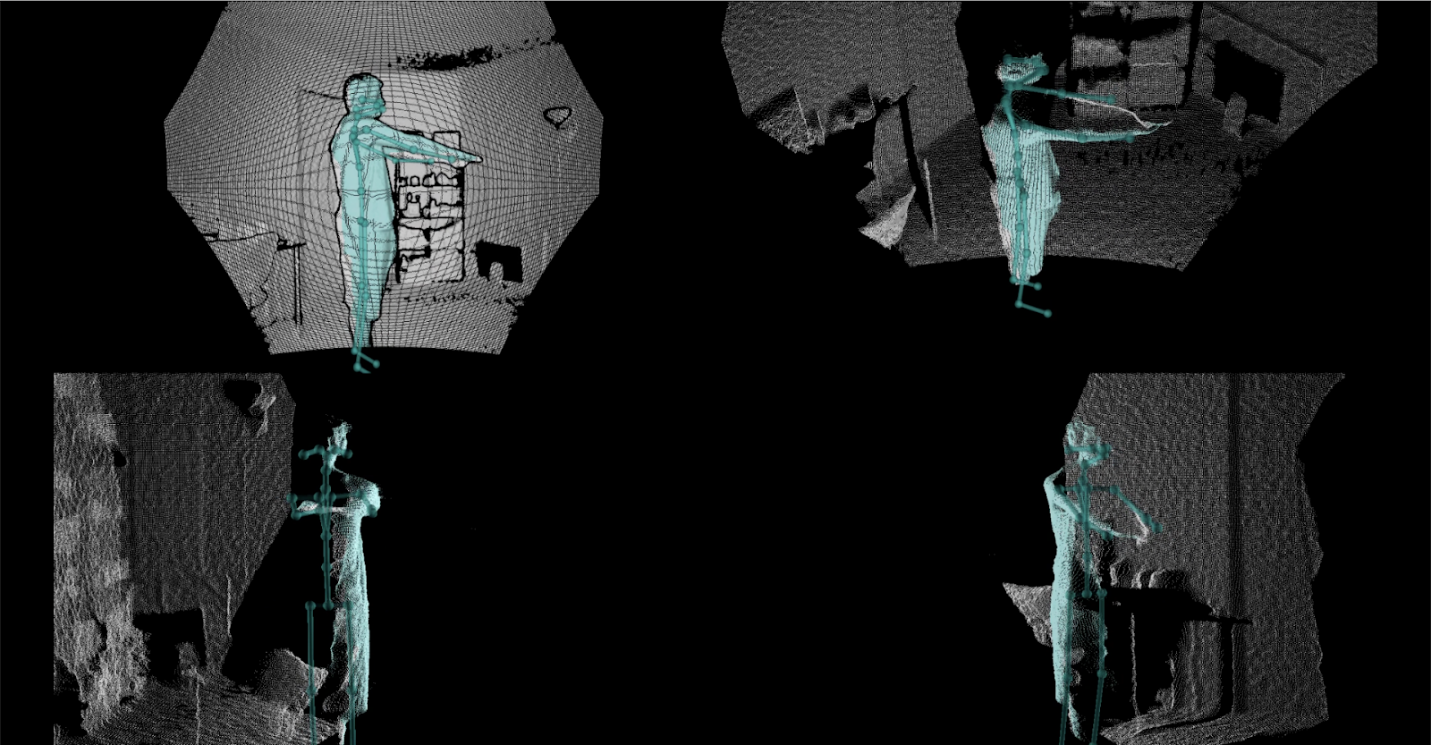

Image: Microsoft Azure Kinect output sample

- Processing real-time video streams to identify communications, emotions and gestures of family members at home

Image: Publicly available videos used for training and testing algorithms (image from SuperNanny video)